$25.6 Million Deepfake Fraud Highlights Urgent Need for AI-Resistant Security Frameworks in 2025

San Francisco, CA – October 5, 2025 –

The cybersecurity landscape has fundamentally transformed in 2025, with artificial intelligence-powered attacks reaching unprecedented levels of sophistication and scale. New research reveals that AI-driven phishing attacks have surged by an alarming 1,265%, while deepfake fraud incidents have resulted in losses exceeding $25.6 million in single attacks. As cybercrime costs are projected to reach $10.5 trillion annually by 2025, organizations worldwide are scrambling to implement AI-resistant security frameworks to combat this evolving threat landscape.

The emergence of AI as both a weapon and shield in cybersecurity has created what experts are calling an “AI arms race,” fundamentally altering how organizations approach digital security. Traditional security measures that relied on human intuition and signature-based detection are proving inadequate against AI-generated threats that can adapt, evolve, and scale at machine speed.

The AI Threat Explosion: By the Numbers

The statistics surrounding AI-powered cyberthreats paint a sobering picture of the current security landscape. According to the latest cybersecurity intelligence reports, the transformation has been both rapid and devastating:

Phishing Evolution

AI-generated phishing emails now achieve a 60% success rate compared to just 12% for traditional attempts. The sophistication of these attacks has eliminated the telltale signs that security training programs have historically taught users to identify, such as poor grammar and generic messaging.

Deepfake Proliferation

The first quarter of 2025 alone recorded 179 deepfake incidents, surpassing the total for all of 2024 by 19%. These attacks have moved beyond simple audio manipulation to include sophisticated video conferences featuring multiple AI-generated participants.

Malware Evolution

Polymorphic malware, enhanced by AI capabilities, now appears in 76.4% of all phishing campaigns. These threats can generate new, unique versions of themselves every 15 seconds, making traditional signature-based detection obsolete.
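To illustrate why constantly changing variants defeat signature matching, here is a minimal sketch that treats a "signature" as an exact SHA-256 hash of a payload. This is a simplifying assumption: real detection engines also use byte patterns and fuzzy hashes. The payload bytes and the names `KNOWN_BAD` and `is_flagged` are hypothetical and used for illustration only.

```python
import hashlib


def signature(payload: bytes) -> str:
    """Return an exact-match signature (SHA-256 hex digest) for a payload."""
    return hashlib.sha256(payload).hexdigest()


# Hypothetical blocklist holding the signature of one known sample.
KNOWN_BAD = {signature(b"EXAMPLE-PAYLOAD-v1")}


def is_flagged(payload: bytes) -> bool:
    """Flag a payload only if its signature is already on the blocklist."""
    return signature(payload) in KNOWN_BAD


original = b"EXAMPLE-PAYLOAD-v1"
variant = original + b"\x00"  # a one-byte change stands in for a new variant

print(is_flagged(original))  # the known sample matches the blocklist
print(is_flagged(variant))   # the altered sample has a different hash
```

Because any change to the bytes produces a different hash, a blocklist built this way only catches samples it has already seen.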

“We’re witnessing a fundamental shift in the threat landscape,” said Dr. Sarah Chen, Chief Security Officer at CyberDefense Analytics.

“AI has democratized advanced attack techniques that were previously available only to nation-state actors. Now, any cybercriminal with $50 can access sophisticated AI-powered attack tools.”

The $25.6 Million Wake-Up Call: Arup Deepfake Fraud

The most shocking demonstration of AI’s potential for cybercrime occurred at global engineering firm Arup, where sophisticated deepfake technology enabled fraudsters to steal $25.6 million in a single, coordinated attack.

The attack began with a seemingly routine email that appeared to come from the company’s CFO, requesting an urgent financial transaction. When the targeted finance employee expressed initial skepticism, they were invited to a video conference to discuss the matter. During the call, the employee saw and heard what appeared to be the CFO and several colleagues discussing the confidential transaction.

Unbeknownst to the victim, every other participant on the call was an AI-generated deepfake. The visual and auditory evidence was so convincing that the employee proceeded to make 15 separate transfers, ultimately sending $25.6 million to the fraudsters.

“This wasn’t a traditional hack,” explained Arup’s Global CIO in a post-incident analysis. “It was technology-enhanced social engineering that exploited our most fundamental human instincts to trust what we see and hear.”

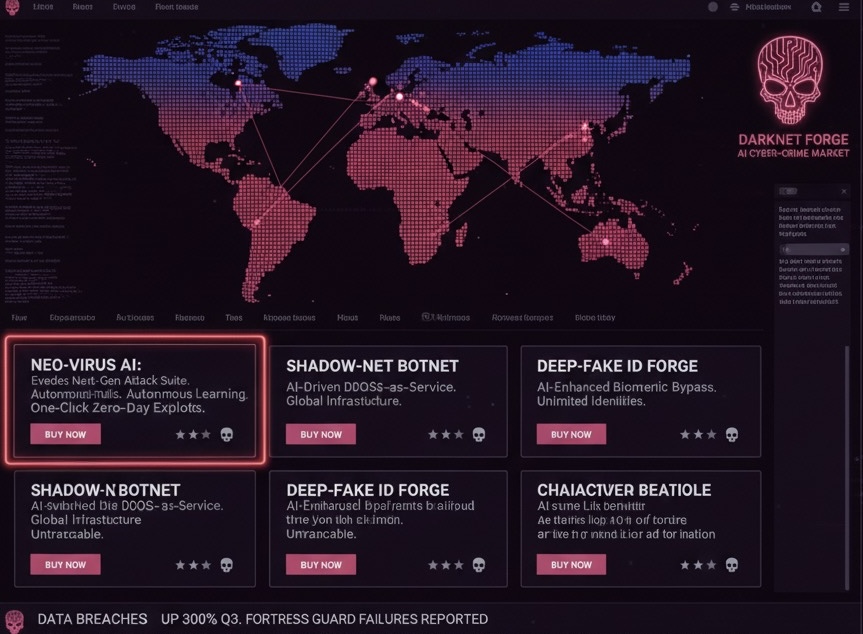

The Democratization of Advanced Cyber Weapons

Malicious AI platforms like WormGPT and FraudGPT, available on dark web forums, have eliminated the technical barriers that previously limited sophisticated attacks to skilled hackers.

These “crime-as-a-service” platforms can generate convincing Business Email Compromise (BEC) messages, create polymorphic malware, and even provide step-by-step attack guidance. The cost barrier has plummeted, with advanced attack kits available for as little as $50.

“The democratization of AI attack tools represents a paradigm shift in cybersecurity,” noted Dr. Marcus Rodriguez, cybersecurity researcher at MIT.

“We’re no longer dealing with a small number of highly skilled adversaries, but potentially millions of threat actors armed with AI-enhanced capabilities.”

Defensive AI: Fighting Fire with Fire

Behavioral Analytics Revolution

Modern AI security systems focus on behavioral anomalies instead of signature-based detection. These systems learn normal behavior for users and systems, then flag deviations as potential compromises.
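The idea of learning normal behavior and flagging deviations can be sketched with a simple statistical baseline. The example below is an assumption-laden illustration, not a description of any specific product: it uses a z-score over a hypothetical history of daily login counts, and the function names and the threshold of 3.0 are invented for this sketch. Production systems typically model many more signals.

```python
from statistics import mean, stdev


def build_baseline(history):
    """Learn 'normal' as the mean and sample standard deviation of past values."""
    return mean(history), stdev(history)


def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value that lies more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # With no variation in the history, any different value is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical history: logins per day for one user over a week.
logins_per_day = [4, 5, 6, 5, 4, 6, 5]
baseline = build_baseline(logins_per_day)

print(is_anomalous(5, baseline))   # a typical day
print(is_anomalous(40, baseline))  # a sudden burst of logins
```

Unlike a signature lookup, this check needs no prior sample of the attack; it only needs enough history to describe what normal looks like.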

Predictive Threat Intelligence

AI-powered platforms now analyze vast amounts of data to predict future attacks, helping organizations anticipate vulnerabilities before they’re exploited.

Automated Response

Security Orchestration, Automation, and Response (SOAR) platforms can now execute complex mitigation playbooks in seconds, dramatically reducing breach lifecycles.

According to IBM’s 2025 Cost of a Data Breach Report, organizations using AI and automation extensively reduce breach costs by $1.9 million and shorten breach lifecycles by 80 days.

Conclusion: Navigating the AI Security Paradigm

The surge in AI-powered cyber attacks represents a fundamental shift in cybersecurity. The 1,265% increase in AI-driven phishing and the $25.6 million Arup fraud demonstrate that traditional approaches no longer suffice.

Success in this new environment requires embracing AI-powered defense tools and robust governance frameworks. Organizations that adapt will thrive; those that don’t may face catastrophic consequences.

The time for action is now: the cost of inaction in the face of AI-powered threats is simply too high to ignore.

About TechTrib: TechTrib is a leading technology news platform providing comprehensive coverage of cybersecurity, artificial intelligence, and emerging technology threats. Visit techtrib.com.

Contact Information:

Email: news@techtrib.com

Twitter: @TechTribNews

LinkedIn: @TechTrib

Disclaimer: This article is for informational purposes only and should not be considered security advice. Organizations should consult qualified cybersecurity professionals when implementing AI security measures.